Achievement

ORNL researchers proposed a new machine learning (ML)-based framework to detect advanced cyberattacks in modern vehicles. In a recent study accepted for publication at the Fourth International Workshop on Automotive and Autonomous Vehicle Security (AutoSec), a team from ORNL introduced a forensic framework for detecting masquerade attacks on the Controller Area Network (CAN) bus of modern vehicles.

Modern vehicles are complex cyber-physical systems containing up to hundreds of electronic control units (ECUs). ECUs are embedded computers that communicate over a few CANs to help control vehicle functionality, including acceleration, braking, steering, and engine status. CANs are susceptible to cyberattacks of varying sophistication. Fabrication attacks are the easiest to administer (an adversary simply sends extra frames on a CAN) but also the easiest to detect, because they disrupt frame frequency. To evade time-based detection methods, adversaries must administer masquerade attacks, sending frames in lieu of (and therefore at the expected time of) benign frames but with malicious content. CAN information is encoded as hundreds of time series (signals) representing the state of the vehicle. Research has shown that CAN attacks, and masquerade attacks in particular, can affect vehicle functionality. Examples include causing unintended acceleration, deactivating the vehicle’s brakes, and steering the vehicle.
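The contrast between the two attack types can be illustrated with a minimal Python sketch of a timing check. It is an illustration only and is not taken from the study: the 10 ms period, the 20% tolerance, and the function names are assumptions chosen for the example.

```python
from statistics import median


def inter_arrival_times(timestamps):
    """Gaps (in seconds) between consecutive frames of one CAN ID."""
    return [later - earlier for earlier, later in zip(timestamps, timestamps[1:])]


def timing_anomaly(timestamps, expected_period, tolerance=0.2):
    """Return True when the median gap deviates from the expected period
    by more than `tolerance` (expressed as a fraction of the period)."""
    gaps = inter_arrival_times(timestamps)
    if not gaps:
        return False
    return abs(median(gaps) - expected_period) > tolerance * expected_period


# Hypothetical CAN ID that is normally broadcast every 10 ms.
period = 0.010
benign = [i * period for i in range(100)]

# Fabrication: the attacker adds one extra frame between each benign pair,
# so the observed frame frequency doubles.
fabricated = sorted(benign + [t + period / 2 for t in benign])

# Masquerade: malicious frames replace benign ones at the same times,
# so the timestamps are indistinguishable from benign traffic.
masquerade = list(benign)

print(timing_anomaly(fabricated, period))  # True
print(timing_anomaly(masquerade, period))  # False
```

In this toy setup the timing check flags the fabricated traffic but not the masquerade traffic, because the masquerade frames arrive exactly when benign frames would. Catching them requires looking at what the frames carry rather than when they arrive.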

Significance and Impact

The widespread dependence of modern vehicles on CANs, combined with their security vulnerabilities, has been met with a push to develop intrusion detection systems (IDSs) for CAN. Generally, there are two types of IDS methods: signature-based and machine learning (ML)-based. Signature-based methods rely on a predefined set of rules for attack conditions. Behavior that matches a predefined signature is regarded as an attack. However, given the heterogeneous nature of the CAN bus in terms of transmission rates and broadcasting, effective rules for detecting attacks are difficult to design, which contributes to high rates of false negatives.

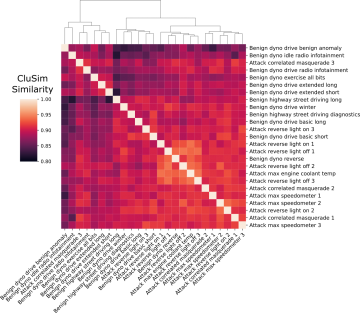

In contrast, ML-based methods profile benign behavior to identify anomalies or generalized attack patterns when the traffic does not behave as expected. Detecting subtle masquerade attacks requires analyzing the payload content, because the correlation between certain signals may change when the frame content is modified during an attack. Analyzing translated signals, and in particular the relationships between them, is therefore a promising avenue for achieving a more effective defense against advanced masquerade attacks.

Research Details

To detect masquerade attacks, ORNL researchers propose a forensic framework that decides whether recorded CAN traffic contains masquerade attacks. The proposed framework works at the signal level and leverages time series clustering similarity to arrive at statistical conclusions. The researchers test the framework on available, readable signal-level CAN traffic recorded in benign and attack conditions. The evaluation results demonstrate that the framework can detect masquerade attacks in previously recorded CAN traffic with high accuracy.
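The idea of comparing how signals cluster in different captures can be sketched in a few lines of Python. This is a simplified stand-in and not the researchers' implementation: it groups signals by thresholding pairwise Pearson correlation (a single-linkage cut) and compares the resulting groupings with the Rand index, and it omits any statistical testing step. The signal names, the 0.8 threshold, and the synthetic data are all assumptions made for illustration.

```python
import math
from itertools import combinations


def pearson(x, y):
    """Pearson correlation of two equal-length series (0.0 if either is constant)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy) if sx and sy else 0.0


def cluster_signals(signals, threshold=0.8):
    """Group signals whose |correlation| >= threshold (single-linkage cut).
    Returns a dict mapping each signal name to a cluster label."""
    names = sorted(signals)
    parent = {name: name for name in names}

    def find(name):
        while parent[name] != name:
            parent[name] = parent[parent[name]]  # path halving
            name = parent[name]
        return name

    for a, b in combinations(names, 2):
        if abs(pearson(signals[a], signals[b])) >= threshold:
            parent[find(a)] = find(b)
    return {name: find(name) for name in names}


def rand_index(labels_a, labels_b):
    """Fraction of signal pairs on which two clusterings agree
    (both place the pair together, or both place it apart)."""
    pairs = list(combinations(sorted(labels_a), 2))
    agree = sum(
        (labels_a[p] == labels_a[q]) == (labels_b[p] == labels_b[q])
        for p, q in pairs
    )
    return agree / len(pairs)


def make_capture(n, speed_rate, steer_rate):
    """Synthetic benign capture: speed, rpm, and wheel speed move together;
    steering varies independently. All signals here are hypothetical."""
    speed = [50 + 10 * math.sin(t / speed_rate) for t in range(n)]
    return {
        "speed": speed,
        "rpm": [40 * s for s in speed],
        "wheel_speed": [0.98 * s for s in speed],
        "steering": [math.cos(t / steer_rate) for t in range(n)],
    }


benign_reference = make_capture(200, speed_rate=20, steer_rate=3)
benign_other = make_capture(200, speed_rate=15, steer_rate=4)

# Toy masquerade: the reported wheel speed is pinned to a fixed value,
# which breaks its usual relationship with speed and rpm.
attack = dict(benign_reference)
attack["wheel_speed"] = [30.0] * 200

reference_clusters = cluster_signals(benign_reference)
print(rand_index(reference_clusters, cluster_signals(benign_other)))
print(rand_index(reference_clusters, cluster_signals(attack)))
```

In this toy example the two benign captures yield the same grouping of signals, while the capture with the manipulated wheel speed yields a different grouping and therefore a lower similarity score. A forensic decision would additionally need a principled way to judge how low a score must be before a capture is considered suspicious.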

To the best of the researchers' knowledge, the results from this research are the first to show systematic evidence of a forensic framework successfully detecting masquerade attacks based on time series clustering using a dataset of realistic and verified masquerade attacks.

Unlike existing detection methods that only detect deviations in frame frequency, the ORNL researchers say their method detected advanced attacks in a previously collected CAN dataset containing masquerade attacks (the ROAD dataset). They also developed a forensic tool as a proof of concept to demonstrate the potential of the proposed approach for detecting CAN masquerade attacks.

Publication:

P. Moriano, R. A. Bridges, and M. D. Iannacone. Detecting CAN Masquerade Attacks with Signal Clustering Similarity. Fourth Workshop on Automotive and Autonomous Vehicle Security (AutoSec), 2022. DOI: https://dx.doi.org/10.14722/autosec.2022.23028

Last Updated: April 29, 2022 - 8:55 am