Achievement

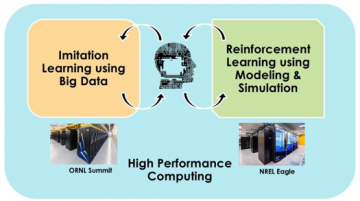

A team of researchers at Oak Ridge National Laboratory (ORNL) and the National Renewable Energy Laboratory (NREL) combined conditional imitation learning with a static dataset, reinforcement learning in a simulation environment (the CARLA driving simulator), and high-performance computing to train a neural network to drive a car. In imitation learning, the neural network learns from static data; in reinforcement learning, it learns by interacting with an environment. The team showed that this combined learning approach holds promise for creating an artificial driver for vehicles, and laid the groundwork for scaling the approach on ORNL’s Summit machine.
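As a rough illustration of the two learning modes described above, the following minimal Python sketch first pretrains a policy by imitation on a small static dataset and then fine-tunes it by reinforcement learning against a simulator. It is not the researchers' implementation: the one-dimensional lane world, the tabular softmax policy (standing in for the neural network), the REINFORCE update, and every name and hyperparameter below are assumptions chosen for brevity, whereas the actual work used the CARLA simulator.

```python
import math
import random

# Hypothetical toy world: the state is the car's lateral offset from the lane centre.
STATES = [-2, -1, 0, 1, 2]
ACTIONS = [-1, 0, 1]  # steer left, hold, steer right

# Static "expert" dataset of (state, action) pairs. It deliberately covers only
# part of the state space, so imitation alone leaves states -2 and 2 untrained.
EXPERT_DATA = [(-1, 1), (0, 0), (1, -1)]


def softmax(logits):
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def step(state, action):
    """Toy simulator: move the car, clip to the road, reward staying centred."""
    nxt = max(-2, min(2, state + action))
    return nxt, -abs(nxt)


def imitation_update(logits, dataset, lr=0.5, epochs=50):
    """Imitation learning: cross-entropy gradient steps on static data."""
    for _ in range(epochs):
        for s, a in dataset:
            probs = softmax(logits[s])
            for i, act in enumerate(ACTIONS):
                target = 1.0 if act == a else 0.0
                logits[s][i] += lr * (target - probs[i])


def reinforce_update(logits, rng, episodes=2000, horizon=5, lr=0.1):
    """Reinforcement learning (REINFORCE): learn from simulated episodes."""
    for _ in range(episodes):
        s = rng.choice(STATES)
        trajectory = []
        for _ in range(horizon):
            probs = softmax(logits[s])
            i = rng.choices(range(len(ACTIONS)), weights=probs)[0]
            nxt, reward = step(s, ACTIONS[i])
            trajectory.append((s, i, reward, probs))
            s = nxt
        ret = 0.0  # undiscounted reward-to-go
        for s_t, i_t, r_t, probs_t in reversed(trajectory):
            ret += r_t
            for j in range(len(ACTIONS)):
                grad = (1.0 if j == i_t else 0.0) - probs_t[j]
                logits[s_t][j] += lr * ret * grad


def greedy(logits, state):
    """Return the highest-scoring action for a state."""
    best = max(range(len(ACTIONS)), key=lambda i: logits[state][i])
    return ACTIONS[best]


if __name__ == "__main__":
    rng = random.Random(0)
    policy = {s: [0.0, 0.0, 0.0] for s in STATES}
    imitation_update(policy, EXPERT_DATA)   # stage 1: static data
    reinforce_update(policy, rng)           # stage 2: interaction
    for s in STATES:
        print(s, greedy(policy, s))
```

In this sketch, imitation gives the policy sensible behavior only in the states the dataset covers; interaction with the simulator is what lets it learn to recover from the offsets the dataset never showed.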

Significance and Impact

This work demonstrates an approach that can automate the design of deep neural networks for autonomous vehicles. The approach could lower the barrier to entry for creating intelligent control systems whose driving behavior is indistinguishable from that of human drivers.

Research Details

- Researchers developed a quantitative metric for evaluating the driving behavior of a control algorithm.

- Researchers showed that imitation learning from a static dataset, combined with experiential interaction with an environment via reinforcement learning, holds significant promise for creating effective driving algorithms.

- Researchers showed that an HPC system may significantly speed up the training process and reduce the time to solution.

Citation and DOI

Robert Patton, Shang Gao, Spencer Paulissen, Nicholas Haas, Brian Jewell, Xiangyu Zhang, Peter Graf. "Heterogeneous Machine Learning on High Performance Computing for End to End Driving of Autonomous Vehicles." Society of Automotive Engineers World Congress Experience 2020.

Overview

Current artificial intelligence techniques for end-to-end driving of autonomous vehicles typically rely on a single form of learning or training process, along with a corresponding dataset or simulation environment. A variety of learning modalities have shown success, in the sense that the machine can “drive” a vehicle in a limited test environment. However, real-world driving extends significantly beyond such limited test environments, which creates an enormous gap in capability between the two realms. With their superior neural network structures and learning capabilities, humans can be trained within a short period of time to proceed from limited test environments to real-world driving. For machines, this gap is guarded by at least two challenges: 1) machine learning techniques remain brittle and unable to generalize to a wide range of scenarios, and 2) effective training data is needed that enhances generalization and generates the desired driving behavior. Each challenge can be computationally intensive on its own, which exacerbates the gap. Moreover, it has not yet been shown that a single form of learning or training is capable of addressing a large range of scenarios. As a result, solving the first challenge does not inherently solve the second, and vice versa.

The work described here discusses an approach to address the first challenge that would also provide a foundation for solving the second. Our approach combines conditional imitation learning with a static dataset, reinforcement learning with a simulation environment, and high-performance computing to train a neural network. This reduces the “time to solution” relative to existing techniques for autonomous driving and provides an extensible framework to address the second key challenge.

Last Updated: January 14, 2021 - 8:50 pm