Achievement

Extended the SharP programming abstraction to support access hints/constraints, which, when composed with usage hints, enables data-centric computing, and demonstrated the utility of these extensions by adapting the petascale QMCPack application and Graph500 benchmark to a data-centric approach.

Significance and Impact

This work demonstrates the utility and productivity advantages of the SharP programming abstraction when adapting or developing applications with a data-centric approach.

Research Details

- Identified and motivated four areas of data-centric computing, which were used to analyze the petascale capable application QMCPack and the Graph500 benchmark.

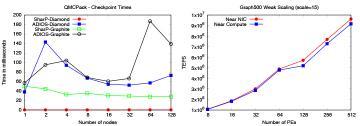

- Adapted QMCPack to leverage a data-centric approach with respect to (i) data resilience by using a flexible, lightweight checkpoint/restart mechanism and (ii) data locality by using contiguous data structures and near NIC communication resources.

- Adapted the Graph500 benchmark to a data-centric approach with respect to data locality by allocating the important data structures used for communication near the NIC.

- Evaluated and demonstrated the utility of our modifications on ORNL’s Titan system and found our data-centric approach reduced checkpoint times by up to 85% for QMCPack and improved the data intensive performance of Graph500 by up to 9.9%.

Overview

Extreme-scale applications (i.e., Big-Compute) are becoming increasingly data-intensive, i.e., producing and consuming increasingly large amounts of data. The HPC systems traditionally used for these applications are now used for Big-Data applications such as data analytics, social network analysis, machine learning, and genomics. As a consequence of these trends, the system architecture should be flexible and data-centric. This can already be witnessed in the pre-exascale systems with TBs of on-node hierarchical and heterogeneous memories, PBs of system memory, low-latency, high-throughput networks, and many threaded cores. As such, the pre-exascale systems suit the needs of both Big-Compute and Big-Data applications. Though the system architecture is flexible enough to support both Big-Compute and Big-Data, we argue there is a software gap. Particularly, we need data-centric abstractions to leverage the full potential of the system, i.e., there is a need for native support for data resilience, the ability to express data locality and affinity, mechanisms to reduce data movement, the ability to share data, and abstractions to express User’s data usage and data access patterns. In this paper, we (i) show the need for taking a holistic approach towards data-centric abstractions, (ii) show how these approaches were realized in the SHARed data-structure centric Programming abstraction (SharP) library, a data-structure centric programming abstraction, and (iii) apply these approaches to a variety of applications that demonstrate its usefulness. Particularly, we apply these approaches to QMCPack and the Graph500 benchmark and demonstrate the advantages of this approach on extreme-scale systems.

Last Updated: May 28, 2020 - 4:04 pm